Two papers on learned stream processing accepted at the 16th ACM International Conference on Distributed and Event-Based Systems (DEBS) 2022

Roman Heinrich and Manisha Luthra will present their work at the conference, which will be held from 27 to 30 June in Copenhagen, Denmark.

2022/06/20

We congratulate them on these acceptances!

Zero-Shot Cost Models for Distributed Stream Processing

Roman Heinrich, Manisha Luthra, Harald Kornmayer, Carsten Binnig

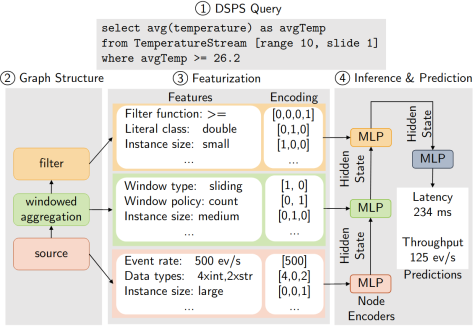

Zero-shot cost models aim to provide accurate cost predictions for dynamic and unseen workloads in Distributed Stream Processing Systems (DSPS). A major premise of this work is that the proposed learned model can generalize to the dynamics of streaming workloads out of the box. This means that a model, once trained, can accurately predict performance metrics such as latency and throughput even if the characteristics of the data and workload, or the deployment of operators to hardware, change at runtime. The model can therefore be used for tasks such as optimizing the placement of operators to minimize the end-to-end latency of a streaming query or to maximize its throughput, even under varying conditions. Our evaluation on a well-known DSPS, Apache Storm, shows that the model accurately predicts costs for unseen workloads and queries and generalizes across real-world benchmarks.
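To make the placement use case concrete, here is a minimal sketch of how any cost model, treated as a black box, could drive operator placement: score every operator-to-node assignment and keep the one with the lowest predicted latency. The function names, node names, and the toy latency formula are hypothetical stand-ins; the actual zero-shot model in the paper is a learned model, not this hand-written formula, and exhaustive search is only one possible optimizer.

```python
from itertools import product
from typing import Callable, List, Optional, Tuple

Placement = Tuple[str, ...]  # placement[i] is the node that hosts operator i


def best_placement(
    operators: List[str],
    nodes: List[str],
    predict_latency: Callable[[Placement], float],
) -> Tuple[Optional[Placement], float]:
    """Exhaustively score every placement with a cost model and return the
    placement with the lowest predicted end-to-end latency."""
    best: Optional[Placement] = None
    best_cost = float("inf")
    for placement in product(nodes, repeat=len(operators)):
        cost = predict_latency(placement)
        if cost < best_cost:
            best, best_cost = placement, cost
    return best, best_cost


# Hypothetical toy cost model standing in for a learned one:
# per-operator processing time per node plus a penalty per cross-node hop.
NODE_MS = {"edge": 4.0, "cloud": 1.0}
HOP_MS = 5.0


def toy_latency(placement: Placement) -> float:
    compute = sum(NODE_MS[node] for node in placement)
    hops = sum(1 for a, b in zip(placement, placement[1:]) if a != b)
    return compute + HOP_MS * hops


if __name__ == "__main__":
    plan, cost = best_placement(["source", "filter", "sink"], ["edge", "cloud"], toy_latency)
    print(plan, cost)
```

With this toy model, placing all three operators on the faster "cloud" node avoids every hop penalty and wins with a predicted 3.0 ms. Exhaustive enumeration grows exponentially with the number of operators, so a realistic optimizer would prune or search heuristically; the point of the sketch is only that the cost model replaces expensive trial deployments.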

PANDA: Performance Prediction for Parallel and Dynamic Stream Processing

Pratyush Agnihotri, Boris Koldehofe, Carsten Binnig, Manisha Luthra

PANDA focuses on selecting appropriate resources for parallel stream processing in the presence of dynamic and unseen workloads. The main idea is to use zero-shot cost models to predict optimal degrees of parallelism for the massively parallel operations of a DSPS. A core challenge we aim to solve in this work is predicting when and how to scale data-parallel and task-parallel operations in a DSPS to meet elasticity demands. Our preliminary evaluation on a widely used DSPS, Apache Flink, shows that parallelism mechanisms strongly influence the performance characteristics of a DSPS across different workloads, an influence that PANDA's learned model must learn and adapt to.
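As an illustration of the "when and how to scale" question, the sketch below shows one simple way a throughput predictor could be used to choose a parallelism degree: pick the smallest degree whose predicted throughput keeps up with the input rate. This is a generic threshold search, not PANDA's actual decision procedure, and `toy_throughput` is a hypothetical stand-in with diminishing returns from coordination overhead, not a learned model.

```python
from typing import Callable, Optional


def min_parallelism(
    predict_throughput: Callable[[int], float],
    input_rate: float,
    max_degree: int,
) -> Optional[int]:
    """Return the smallest parallelism degree whose predicted throughput
    (tuples/s) meets the input rate, or None if no degree up to max_degree does."""
    for degree in range(1, max_degree + 1):
        if predict_throughput(degree) >= input_rate:
            return degree
    return None


def toy_throughput(degree: int, per_instance: float = 1000.0, overhead: float = 0.1) -> float:
    """Hypothetical model: each instance adds capacity, but every extra
    instance also adds a fixed fraction of coordination overhead."""
    return per_instance * degree / (1 + overhead * (degree - 1))


if __name__ == "__main__":
    print(min_parallelism(toy_throughput, input_rate=3000.0, max_degree=8))
```

Under these assumptions, a rate of 3,000 tuples/s needs a degree of 4 (degree 3 yields only 2,500 tuples/s), while a rate the model can never reach within `max_degree` returns `None`, signalling that scaling this operator alone is not enough.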