Motivation

Peer review is at the core of modern academic quality control, and yet reviewing itself remains a rather informal practice that varies greatly across fields, research communities and reviewer demographics. The lack of quality assurance, standardization and training in peer review jeopardizes this quality control, causing publication delays and the dissemination of spurious results. The recent trend towards openness in scientific publishing and evaluation, manifested by the growing popularity of preprint servers, open access journals and public discussion platforms, makes the need for high-quality peer reviewing even more pronounced.

Approach

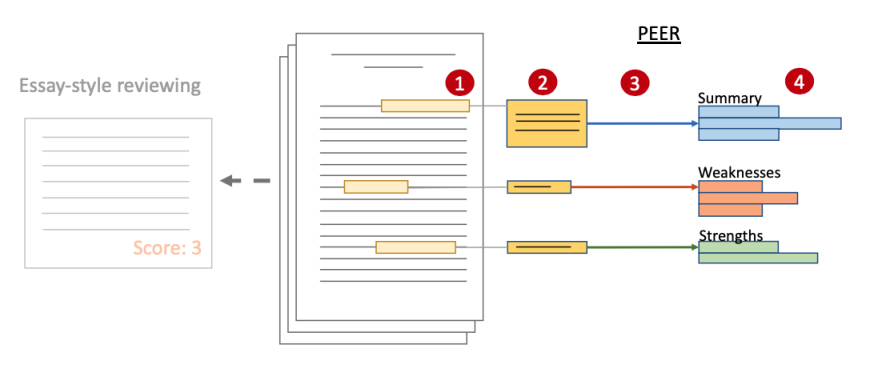

Despite advances in scientific communication and the availability of new digital modes of interaction, peer reviewing has not evolved over the past decades: a classic peer review is an unstructured essay of arbitrary length and thoroughness, sometimes accompanied by a numerical score. We combine existing best practices in peer reviewing, discourse theory and annotation-based collaboration to advance the peer reviewing of scientific manuscripts, and develop a dedicated writing assistance tool: PEER.

Unlike traditional, essay-style reviewing, PEER builds upon the informal annotation (1) and commenting (2) that accompany the reading of scientific manuscripts. It guides reviewers towards authoring comprehensive and concise review reports based on the annotations they make and the reviewing schemata provided by the organizers of the reviewing campaign (4). To make manuscript assessment more efficient, we introduce assistance models (3) that use natural language processing to help users perform routine reviewing operations without biasing their evaluation.

Team

- Prof. Dr. Iryna Gurevych (Principal Investigator)

- Dennis Zyska

- Ilia Kuznetsov

Funding

This project is funded by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation).
